Introduction

Data orchestration is the intelligent, governed, and agile flow of data across systems, supporting real-time, batch, and streaming processes for unified customer engagement.

Effective data orchestration delivers seamless, relevant, and real-time experiences across all customer touchpoints. It involves much more than moving data between systems: it gives both business and technical teams governed, timely information so they can act with confidence and precision.

Integrating disparate data sources gives organizations a unified view of their customers, which enables personalized interactions based on individual preferences and behaviors. Effective data orchestration also improves decision-making by giving teams access to the right information at the right time. This capability streamlines operations and fosters innovation, as teams can use data-driven insights to develop new strategies and solutions that meet the changing needs of the market, driving loyalty and growth.

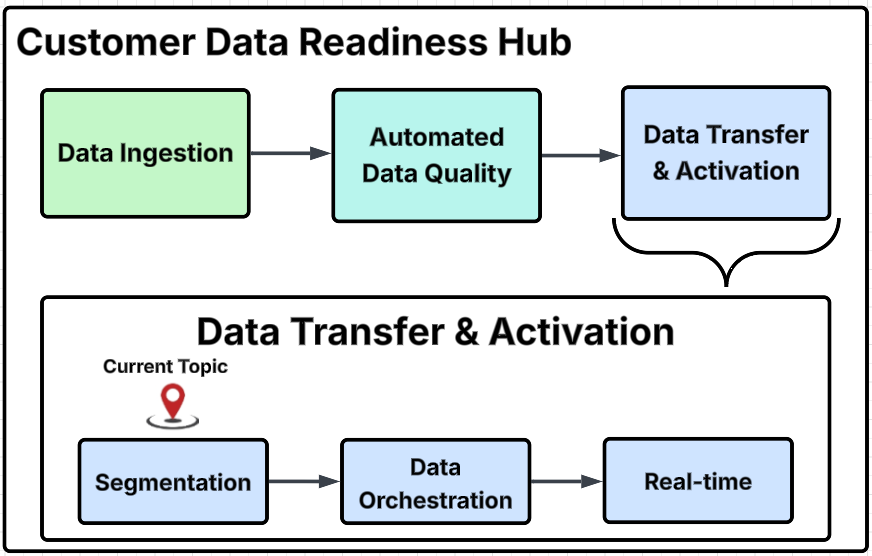

Customer Data Readiness Hub diagram

This diagram outlines the primary process steps within the Customer Data Readiness Hub, provides a detailed breakdown of specific activities within each major group, and identifies the particular step addressed on this page with the "Current Topic" marker.

Core principles of data orchestration

Unified flow of data

Unifying data from various sources, whether they are batch processes, streaming data, or real-time APIs, into a cohesive and orchestrated pipeline guarantees that every interaction is grounded in the same reliable and consistent information.

To achieve this, Redpoint focuses on several key strategies:

- Breaking down silos: Redpoint dismantles barriers between cloud-based, on-premises, and hybrid environments to foster an integrated approach to data management that enhances accessibility and usability across different platforms.

- Maintaining synchronization: Redpoint continuously synchronizes operational systems, analytical frameworks, and engagement platforms so that organizations can operate efficiently and make informed decisions based on real-time data insights.

- Harmonizing data-in-motion and data-at-rest: Redpoint prioritizes the alignment of data that is actively being processed (data-in-motion) with data that is stored and accessible (data-at-rest). This harmonization creates a seamless flow of information, allowing businesses to leverage their data effectively for various applications.

Through these efforts, Redpoint enhances data accessibility and empowers organizations to derive valuable insights, drive innovation, and improve overall operational efficiency.

Governance and transparency

Effective orchestration is only truly valuable when it is transparent and governed. Redpoint empowers organizations by providing them with the ability to see the complete lineage of their data flows. This visibility allows businesses to understand how data moves through their systems, ensuring that every step is accounted for and compliant with necessary regulations.

Our approach includes embedded rules that prioritize security, compliance, and auditability, making it easier for organizations to adhere to industry standards and best practices.

Key features of our orchestration solution include:

- Policy-driven orchestration ensures that access to data is managed based on defined roles within the organization. By implementing role-based access controls, we help organizations maintain security while allowing users to access the information they need.

- Metadata tracking capabilities support governance and regulatory requirements. This tracking aids compliance and improves understanding of data usage and lineage, which in turn informs business decisions.

- Operational monitoring and logging allow organizations to quickly identify and address any issues that may arise, ensuring that data flows smoothly and efficiently.

Redpoint's orchestration capabilities help organizations navigate the complexities of data management with confidence, ensuring that they remain compliant and secure while maximizing the value of their data assets.
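As a rough illustration of policy-driven, role-based access, the sketch below checks a requested action against a role-to-permission policy and denies anything not explicitly granted. The role names, dataset name, `POLICY` table, and `is_allowed` helper are all hypothetical; they are assumptions for illustration and not part of any Redpoint API.

```python
from dataclasses import dataclass

# Hypothetical policy: the actions each role may perform on each dataset.
POLICY = {
    "data_steward": {"customer_profiles": {"read", "merge", "unmerge"}},
    "marketer": {"customer_profiles": {"read"}},
}


@dataclass(frozen=True)
class AccessRequest:
    role: str
    dataset: str
    action: str


def is_allowed(request: AccessRequest, policy: dict = POLICY) -> bool:
    """Return True only if the policy explicitly grants the action (deny by default)."""
    allowed_actions = policy.get(request.role, {}).get(request.dataset, set())
    return request.action in allowed_actions
```

Denying by default means that a new role or dataset has no access until someone adds it to the policy, which keeps the policy itself the single place to audit.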

Automation with human oversight

Automation enhances both speed and scalability within organizations. However, automation must be complemented by human oversight so that automated work stays aligned with overarching business goals. Redpoint’s automation capabilities include triggers, schedules, retries, and error handling, which streamline processes while leaving practitioners able to intervene and make adjustments as needed.

Redpoint's configurable scheduling and triggering logic allows businesses to tailor their automation processes to meet specific operational needs, ensuring that tasks are executed at the appropriate times and under the right conditions. Additionally, the intelligent retry mechanisms, along with alerting and failover handling, provide a safety net that minimizes disruptions and maintains continuity.
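The retry and alerting behavior described above can be sketched generically as follows. This is a minimal illustration using exponential backoff, not Redpoint's implementation; `run_with_retries`, its parameters, and the `on_failure` alert hook are assumed names.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def run_with_retries(
    task: Callable[[], T],
    max_attempts: int = 3,
    base_delay_seconds: float = 1.0,
    on_failure: Optional[Callable[[Exception], None]] = None,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run task, retrying with exponential backoff.

    After the final failed attempt, call the (hypothetical) alert hook
    and re-raise the error so the caller can fail over.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception as error:
            if attempt == max_attempts:
                if on_failure is not None:
                    on_failure(error)  # e.g. notify the on-call practitioner
                raise
            # Wait 1x, 2x, 4x, ... the base delay before the next attempt.
            sleep(base_delay_seconds * 2 ** (attempt - 1))
    raise ValueError("max_attempts must be at least 1")
```

Passing `sleep` as a parameter keeps the function testable without real waiting; in production the default `time.sleep` applies.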

Moreover, Redpoint incorporates human-in-the-loop checkpoints for critical workflows. These checkpoints maintain quality and compliance, as they allow human practitioners to review and validate automated processes at key stages. This integration of human oversight enhances the reliability of automated workflows and fosters a culture of collaboration between technology and human expertise, ultimately driving better outcomes for the business.
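One way to picture a human-in-the-loop checkpoint is as an approval gate that a critical step cannot pass until a reviewer signs off. The sketch below assumes hypothetical `Checkpoint` and `run_critical_step` names and is only meant to show the control flow.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PENDING_REVIEW = "pending_review"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Checkpoint:
    """A hypothetical approval gate placed before a critical workflow step."""

    description: str
    status: Status = Status.PENDING_REVIEW
    audit_log: list = field(default_factory=list)

    def review(self, reviewer: str, approve: bool) -> None:
        """Record the reviewer's decision so it can be audited later."""
        self.status = Status.APPROVED if approve else Status.REJECTED
        self.audit_log.append((reviewer, self.status.value))


def run_critical_step(checkpoint: Checkpoint, step):
    """Run the step only after a human has approved the checkpoint."""
    if checkpoint.status is not Status.APPROVED:
        raise PermissionError(f"Checkpoint not approved: {checkpoint.description}")
    return step()
```

Keeping the decision in an audit log ties the checkpoint back to the governance goals described earlier: every approval or rejection is attributable to a named reviewer.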

In summary, while automation enhances efficiency and scalability, combining automated capabilities with human intervention ensures alignment with business objectives and fosters sustainable growth.

Flexibility and modularity

Modularity allows each data flow to be constructed from a variety of interchangeable steps, enabling users to tailor their processes according to specific needs. By leveraging reusable templates and adhering to standardized best practices, organizations can achieve a high degree of flexibility in their operations. The ability to quickly respond to new data sources, evolving business requirements, or emerging technologies can significantly impact an organization’s success.

The orchestration framework provides several key features that enhance its effectiveness:

- Plug-and-play orchestration components can be easily integrated into existing workflows, allowing users to customize their data pipelines without extensive reconfiguration. This saves time and reduces the complexity associated with traditional data management systems.

- Templates and reusable patterns for common data pipelines enable organizations to streamline their data processes. This fosters consistency across different projects and accelerates the implementation of new data initiatives.

- Support for both IT-driven and marketer-driven use cases ensures that all users can effectively engage with the data orchestration process, regardless of their technical expertise.

Redpoint’s orchestration philosophy emphasizes modularity and equips organizations with the tools necessary to thrive. By focusing on flexibility, reusability, and inclusivity, Redpoint empowers businesses to adapt swiftly to changes and seize new opportunities as they arise.
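To make the idea of interchangeable steps concrete, the sketch below composes a pipeline from small functions that share one signature, so steps can be added, removed, or reordered without touching the others. The step names and `build_pipeline` helper are hypothetical and chosen only for illustration.

```python
from typing import Callable, List

Records = List[dict]
Step = Callable[[Records], Records]


def normalize_email(records: Records) -> Records:
    """Trim whitespace and lowercase the email field; missing values become ''."""
    return [{**r, "email": (r.get("email") or "").strip().lower()} for r in records]


def drop_missing_email(records: Records) -> Records:
    """Remove records that have no usable email address."""
    return [r for r in records if r.get("email")]


def build_pipeline(*steps: Step) -> Step:
    """Compose steps into a single reusable pipeline, applied left to right."""

    def run(records: Records) -> Records:
        for step in steps:
            records = step(records)
        return records

    return run


# A reusable "template": the same composition can serve several data flows.
clean_contacts = build_pipeline(normalize_email, drop_missing_email)
```

Because every step takes and returns a list of records, a new source-specific step can be slotted in without changing the existing ones.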

Real-time responsiveness

Redpoint’s real-time responsiveness allows organizations to harness customer signals—such as events, behaviors, and transactions—to trigger orchestrated flows instantaneously. As a result, brands can act decisively at what is often referred to as the “moment of truth,” ensuring that they meet customer needs and expectations without delay.

Key components of this advanced orchestration include:

- Event-driven orchestration allows businesses to respond dynamically to customer interactions, ensuring that every engagement is timely and relevant.

- Real-time streaming pipelines facilitate the continuous flow of data, enabling organizations to process and analyze customer signals as they occur, rather than relying on outdated batch processing methods. This ensures that the most up-to-date data is the source of truth.

- Integration with engagement and decisioning layers ensures that orchestrated flows are efficient and strategically aligned with business objectives.

The shift towards modern orchestration changes how brands interact with their customers. By leveraging real-time data and event-driven strategies, organizations can create more personalized and impactful experiences, ultimately driving customer satisfaction and loyalty.
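Event-driven orchestration can be pictured as a router that maps each incoming customer signal to the flows registered for it. The following is a minimal in-process sketch; `EventRouter`, the `cart_abandoned` event type, and the handler are assumptions for illustration, and a production system would typically sit behind a streaming platform instead.

```python
from collections import defaultdict
from typing import Callable


class EventRouter:
    """Dispatch events to the handlers registered for their type."""

    def __init__(self) -> None:
        self._handlers = defaultdict(list)

    def on(self, event_type: str) -> Callable:
        """Decorator that registers a handler for one event type."""

        def register(handler: Callable) -> Callable:
            self._handlers[event_type].append(handler)
            return handler

        return register

    def dispatch(self, event: dict) -> list:
        """Run every handler for the event's type and collect the results."""
        return [handler(event) for handler in self._handlers.get(event["type"], [])]


router = EventRouter()


@router.on("cart_abandoned")  # hypothetical customer signal
def queue_reminder(event: dict) -> str:
    return f"queue reminder for customer {event['customer_id']}"
```

Events with no registered handler are ignored here; whether to log or reject them is a design choice that depends on governance requirements.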

Benefits to the enterprise

- Agility: The ability to rapidly deploy new data flows, without the need to wait for extensive custom development processes, allows teams to respond quickly to changing market demands and customer needs, ensuring that they remain competitive and relevant.

- Accuracy: Consistency in data management reduces errors and duplication. By implementing governed pipelines, organizations can ensure that their data is reliable and accurate. This enhances the quality of insights derived from the data and fosters trust among stakeholders who rely on this information for decision-making.

- Customer centricity: A unified and orchestrated approach to data enables organizations to personalize their offerings and engage with customers in a more contextual manner. By leveraging comprehensive data insights, businesses can tailor their interactions, ensuring that they meet the specific needs and preferences of their customers. This level of personalization can significantly enhance customer satisfaction and loyalty.

- Efficiency: Automation reduces operational overhead. By streamlining processes and minimizing manual intervention, organizations can accelerate their time-to-market for new products and services. This efficiency saves resources and allows teams to focus on strategic initiatives that drive growth and innovation.

- Trust: Transparency and governance in data management build confidence in data-driven decision-making. When stakeholders can see how data is collected, processed, and used, they are more likely to trust the insights generated. This trust fosters a culture of data-driven decision-making across the organization, ultimately leading to better business outcomes.

90–120 day operationalization activities

Implementing a data orchestration process tactically involves a structured, phased approach over the first 90–120 days, with clear roles and responsibilities and a focus on operationalization. Here’s what this typically looks like:

1. Discovery & success criteria

In the initial phase of the project, define clear and actionable use cases that will guide your efforts. For instance, explore cross-channel frequency control to ensure that your messaging is consistent and not overwhelming for your audience. Additionally, address email de-duplication to enhance the efficiency of communications and avoid redundancy. By identifying these specific use cases, you can better tailor your strategies to meet the needs of your stakeholders. Furthermore, pinpointing the relevant data domains and establishing key performance indicators (KPIs) provides benchmarks for measuring the success of your orchestration efforts. These KPIs help you evaluate progress and ensure that you are on track to achieve your goals.
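The two example use cases can each be expressed as a small rule. The sketch below is one possible formulation under assumed parameters: a cap of three messages per customer in a rolling seven-day window across all channels, and de-duplication keyed on a trimmed, lowercased email address. The function names and limits are hypothetical.

```python
from datetime import datetime, timedelta


def may_send(
    sent_at: list,
    now: datetime,
    max_messages: int = 3,
    window: timedelta = timedelta(days=7),
) -> bool:
    """Cross-channel frequency control.

    sent_at holds the timestamps of messages already sent to one customer
    on any channel; sending is allowed while the count inside the rolling
    window is below the cap.
    """
    recent = [t for t in sent_at if now - window < t <= now]
    return len(recent) < max_messages


def dedupe_by_email(records: list) -> list:
    """Keep the first record seen for each normalized email address."""
    seen = set()
    unique = []
    for record in records:
        key = record["email"].strip().lower()
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique
```

Writing use cases this precisely during discovery makes the later KPIs easier to define, for example the share of sends blocked by the cap or the duplicate rate per feed.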

2. Data profiling & quality plan

The next step involves a thorough assessment of your current data quality. This assessment identifies and closes critical gaps that may exist, such as issues related to standardization and the absence of required keys. Addressing these gaps significantly improves the reliability of the data. Establishing a baseline for ongoing measurement will allow you to monitor your data quality over time, ensuring that you maintain high standards and make necessary adjustments as you progress. This proactive approach to data profiling will ultimately enhance your decision-making processes and the effectiveness of your orchestration.
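A simple baseline for this step is the completeness of each required key. The sketch below computes, for each key, the share of records that carry a non-empty value; the field names in the usage example are placeholders, and `profile_completeness` is a hypothetical helper.

```python
def profile_completeness(records: list, required_keys: list) -> dict:
    """Return, per required key, the fraction of records with a non-empty value."""
    total = len(records)
    if total == 0:
        return {key: 0.0 for key in required_keys}
    return {
        key: sum(1 for r in records if r.get(key) not in (None, "")) / total
        for key in required_keys
    }


# Placeholder sample: one record lacks an email, another lacks a customer_id.
sample = [
    {"customer_id": "1", "email": "a@x.example"},
    {"customer_id": "2", "email": ""},
    {"customer_id": None, "email": "c@x.example"},
    {"customer_id": "4", "email": "d@x.example"},
]
baseline = profile_completeness(sample, ["customer_id", "email"])
```

Storing the result of each run gives the baseline against which later measurements can be compared.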

3. Rule & workflow design

In this phase, focus on drafting the orchestration logic that will govern your processes. This includes defining match hierarchies, setting thresholds, and establishing survivorship rules that will dictate how data is managed and prioritized. Additionally, design modular and reusable pipeline templates that can be applied to common data flows. This modularity will streamline your workflows and facilitate scalability as data needs evolve. By creating a robust framework for orchestration logic, you can ensure that your operations are efficient and adaptable.
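As an illustration of how a match hierarchy, a threshold, and survivorship rules fit together, the sketch below scores two records (exact email first, then fuzzy name similarity from Python's standard `difflib`), applies a cut-off, and builds a golden record by source priority and recency. The 0.85 threshold, the field names, and the integer `updated` value (for example `20240301`) are assumptions, not recommended settings.

```python
from difflib import SequenceMatcher

MATCH_THRESHOLD = 0.85  # hypothetical cut-off; tune it during back-testing


def match_score(a: dict, b: dict) -> float:
    """Two-level match hierarchy: exact email first, then fuzzy name similarity."""
    if a.get("email") and a.get("email") == b.get("email"):
        return 1.0
    return SequenceMatcher(None, a["name"].lower(), b["name"].lower()).ratio()


def is_match(a: dict, b: dict, threshold: float = MATCH_THRESHOLD) -> bool:
    return match_score(a, b) >= threshold


def survive(records: list, source_priority: list) -> dict:
    """Build a golden record: per field, keep the first non-empty value,
    preferring higher-priority sources and, within a source, newer updates."""
    ranked = sorted(
        records,
        key=lambda r: (source_priority.index(r["source"]), -r["updated"]),
    )
    golden = {}
    for record in ranked:
        for field_name, value in record.items():
            if field_name in ("source", "updated"):
                continue  # bookkeeping fields are not part of the golden record
            if value and field_name not in golden:
                golden[field_name] = value
    return golden
```

Because the source priority is passed in as data, the same survivorship function can be reused across pipeline templates with different orderings.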

4. Back-testing & tuning

To validate orchestration flows, conduct back-testing using historical data. This simulation will allow you to analyze the effectiveness of your proposed strategies in a controlled environment. After running these simulations, review the results with key business stakeholders and data stewards. Their insights will be invaluable in identifying areas for improvement and making necessary adjustments to your rules. This iterative process of review and tuning will help you refine your approach and enhance the overall effectiveness of your orchestration.
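One common way to back-test a match threshold is to replay it over historical pairs that data stewards have already labeled and compare precision and recall at each candidate value. The sketch below assumes such labeled pairs exist; the `backtest_threshold` helper is hypothetical and the data shape in the docstring is an assumption.

```python
def backtest_threshold(scored_pairs: list, threshold: float) -> dict:
    """Evaluate a threshold against steward-reviewed history.

    scored_pairs is a list of (score, is_true_match) tuples, where the
    boolean is the steward's verdict on that historical pair.
    """
    tp = sum(1 for score, truth in scored_pairs if score >= threshold and truth)
    fp = sum(1 for score, truth in scored_pairs if score >= threshold and not truth)
    fn = sum(1 for score, truth in scored_pairs if score < threshold and truth)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"threshold": threshold, "precision": precision, "recall": recall}
```

Running this for several thresholds gives stakeholders a concrete trade-off to review: a higher threshold usually means fewer false merges but more missed matches.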

5. User acceptance testing (UAT) & playbooks

User Acceptance Testing (UAT) ensures that your orchestration meets the needs of end-users. During this phase, validate the end-to-end orchestration process, paying particular attention to error handling and lineage tracking. Develop comprehensive playbooks for data stewards, which will include guidelines for tasks such as merging and un-merging data, as well as maintaining audit trails. These playbooks will serve as essential resources for the team, ensuring that everyone is equipped to manage data effectively and respond to any issues that may arise.

6. Rollout & monitoring

The final phase involves deploying the orchestrated pipelines to production. As part of the rollout, set up dashboards that provide observability and real-time monitoring of your processes. These dashboards will enable you to track performance and quickly identify any anomalies. Additionally, establish quarterly reviews to facilitate continuous improvement and recalibration of your strategies. This ongoing evaluation will ensure that you remain responsive to changing needs and can adapt your orchestration efforts accordingly. Committing to a cycle of monitoring and improvement enhances the effectiveness and reliability of your data orchestration initiatives.

Best practices for operationalization

- Policy-driven orchestration: Implementing a robust framework for policy-driven orchestration ensures that access controls are effectively managed. Use role-based access controls to tailor permissions to specific user roles, thereby enhancing security and compliance. Additionally, establish versioned rules to better track and manage changes over time, ensuring that all stakeholders are aware of the current policies in effect.

- Automation with human oversight: Integrate automation into workflows while maintaining a balance between automation and human oversight. By implementing triggers, schedules, and retries, organizations can streamline processes while minimizing the risk of errors. Furthermore, incorporating human-in-the-loop checkpoints for critical workflows ensures that there is always a human element involved in decision-making, allowing for timely interventions when necessary.

- Governance: Effective governance involves maintaining comprehensive metadata tracking to provide visibility into data usage and lineage. Operational monitoring identifies potential issues before they escalate, while detailed logging ensures that all actions are recorded for accountability and audit purposes. By prioritizing governance, organizations can uphold data integrity and compliance with regulatory requirements.

- Flexibility: Leveraging plug-and-play components and templates can significantly accelerate the deployment of new data flows. This adaptability allows organizations to respond quickly to evolving business needs and integrate new technologies with ease.

- Continuous improvement: To ensure ongoing success, commit to continuous improvement practices. Schedule regular reviews of key performance indicators (KPIs) and lineage dashboards to assess the effectiveness of strategies and identify areas for enhancement. Additionally, retiring dormant feeds helps streamline operations and focus resources on more impactful initiatives. By embracing a mindset of continuous improvement, organizations can drive innovation and achieve sustained growth.

This approach ensures that data orchestration is not only technically sound but also governed, transparent, and aligned with business objectives, empowering both technical and business teams to deliver reliable, real-time customer experiences.

Conclusion

Redpoint’s philosophy on data orchestration emphasizes the importance of enabling organizations to provide real-time, relevant, and reliable customer experiences. This is achieved by ensuring that data is not only fluid and governed but also actionable. Data orchestration should not be viewed merely as a back-office function; rather, it should be recognized as a strategic capability that plays a critical role in unifying the customer journey.

By integrating data orchestration into the core of business operations, organizations can empower their teams to make informed decisions that directly impact customer interactions. This empowerment leads to improved collaboration across departments, fostering a culture of data-driven decision-making. Moreover, by streamlining data processes, businesses can enhance their ability to respond swiftly to customer needs and market changes.

The ultimate goal is to drive measurable outcomes that reflect positively on the organization’s performance. This involves optimizing the use of data and ensuring that every touchpoint in the customer journey is enriched with relevant insights. In doing so, organizations can create personalized experiences that resonate with customers, thereby building loyalty and trust.

In summary, Redpoint’s approach to data orchestration is about transforming data into a strategic asset that fuels growth and innovation. By prioritizing real-time data access and actionable insights, organizations can navigate the complexities of the modern marketplace and deliver exceptional customer experiences.